Most websites don't need a framework

A typical website loads over half a megabyte of JavaScript, and nearly half of it goes unused. We explain where that weight comes from and why most sites don't need it, then demonstrate the alternative with a real example: 94 pages generated in 3 seconds, no framework, no server.

The speed paradox

The web industry has turned building a website into an engineering problem. Frameworks, bundlers, transpilers, runtimes: layer upon layer of technology that promise to make things faster and easier. But the data says otherwise.

According to the Web Almanac 2024 (the annual reference report on the state of the web), a typical website loads 558 KB of JavaScript. Of that, nearly half (44%) goes unused during page load. Those bytes are transferred, decompressed, parsed and executed without contributing anything visible.

And here comes the paradox: an HTML document with CSS renders at practically network speed. The browser receives it and displays it. JavaScript, on the other hand, costs at least three times more to process per byte than HTML or CSS, according to analysis by Alex Russell, an engineer on the Chrome project at Google. In other words, every kilobyte of JavaScript we add to a page costs the browser at least three times as much processing as the same kilobyte of HTML or CSS.

The weight of frameworks

JavaScript frameworks (tools like React, Next.js, Angular or Vue that structure how a web page is built) were created to solve real problems: managing complex applications with heavy interaction, like a document editor or a real-time dashboard. The problem is they have become the default choice for everything, including sites that are essentially documents: a corporate website, a catalogue, a blog.

The result is measurable. The Jamstack chapter of the Web Almanac 2024 compares the JavaScript shipped by sites built with different tools, and the differences are enormous: a prerendered site built with Next.js (one of the most popular frameworks) ships 3.5 times more JavaScript than an equivalent site built with Astro, a generator designed to ship the bare minimum. And both are classified as "static".

For context: Alex Russell's blog loads in 1.2 seconds, with 120 KB of total resources, of which only 8 KB are JavaScript. A typical website loads 558 KB of JavaScript alone. The difference is not about technology: it is about decisions.

How many websites actually need all this?

42% of the world's websites run on WordPress. 29% use no content management system at all. The vast majority of websites are, in essence, collections of pages that rarely change: a restaurant that updates its menu once a month, a law firm that doesn't touch its site for a year, a shop that adds products once a week.

Despite this, 94.5% of websites are classified as dynamic (meaning every visit generates the page from scratch on the server). Only 0.5% are purely static and 5% hybrid. But among the world's 10,000 highest-traffic sites, 12% are already static or hybrid, and that figure has grown 67% in a single year. Prerendered sites weigh, on average, 43% of what their dynamic equivalents weigh.

The question is straightforward: how many of those dynamic sites actually need to be?

The alternative: generated HTML and programmatic SEO

The idea is simple: if you already have the data (products, services, properties, images), a program can read it and generate every HTML page you need. No framework in the browser, no permanent server processing requests, no daily maintenance. This strategy is known as programmatic SEO: instead of writing content by hand for every page, a program combines a template with your data and produces hundreds or thousands of pages, each targeting a specific search people make on Google.
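As a rough illustration of "a template combined with your data", here is a minimal TypeScript sketch. The `Product` shape, the sample records and the function names are all hypothetical, chosen only for this example; a real generator would read its records from a file, database or API and write the results to disk.

```typescript
// Hypothetical record shape; a real catalogue would have its own fields.
interface Product {
  slug: string;
  name: string;
  description: string;
}

// Escape text before placing it inside HTML.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// The template: one function that turns one record into one HTML document.
function renderPage(product: Product): string {
  const name = escapeHtml(product.name);
  const description = escapeHtml(product.description);
  return `<!doctype html>
<html lang="en">
<head>
<meta charset="utf-8">
<title>${name}</title>
</head>
<body>
<h1>${name}</h1>
<p>${description}</p>
</body>
</html>`;
}

// Combine the template with the data: one output file per record.
function buildSite(products: Product[]): Map<string, string> {
  const files = new Map<string, string>();
  for (const product of products) {
    files.set(`${product.slug}.html`, renderPage(product));
  }
  return files;
}

// Illustrative data only.
const site = buildSite([
  { slug: "oak-table", name: "Oak table", description: "Solid oak, seats six." },
  { slug: "desk-lamp", name: "Desk lamp", description: "Adjustable arm & warm light." },
]);
console.log(Array.from(site.keys()));
```

The map of file names to HTML strings keeps the sketch free of file-system calls. Writing each entry to disk is the only step missing before the output can be hosted as static files.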

The benefits are straightforward:

- Scale without manual effort: a catalogue of 500 products generates 500 indexable pages without anyone writing them one by one.

- Long-tail keywords: each page targets very specific searches ("dollar to euro exchange rate today", "3-bedroom flat in Gràcia") where there is less competition and higher purchase intent.

- Low acquisition cost: once the generator is set up, the cost of creating page 501 is practically zero.

- Centralised maintenance: if you change the template, every page updates. If the data changes, the pages regenerate on their own.

It is neither new nor experimental. Some of the highest-traffic websites in the world use it:

- Wise (the international transfer service) generates 14,888 currency conversion pages. Every currency pair (dollars to euros, rupees to pesos...) has its own page with real exchange rates, bank comparisons and the ability to make the transfer directly. 4.7 million organic visits per month.

- Zapier (the automation platform) generates over 800,000 pages showing integrations between products. Each page includes concrete workflows and lets you activate them. 306,000 organic visits per month.

- Nomadlist generates 25,873 city pages for digital nomads, each with internet speed data, temperatures, cost of living and languages. 41,200 organic visits per month.

The key is not the number of pages. The key is that behind every page there is real, relevant data. Without that, it is junk. Google itself has warned: "programmatic SEO is often a fancy banner for spam" (John Mueller, Google Search Advocate).

You don't need to be Wise to take advantage of it. Any business with an organised catalogue already has what it takes.

A real example: 94 pages in 3 seconds

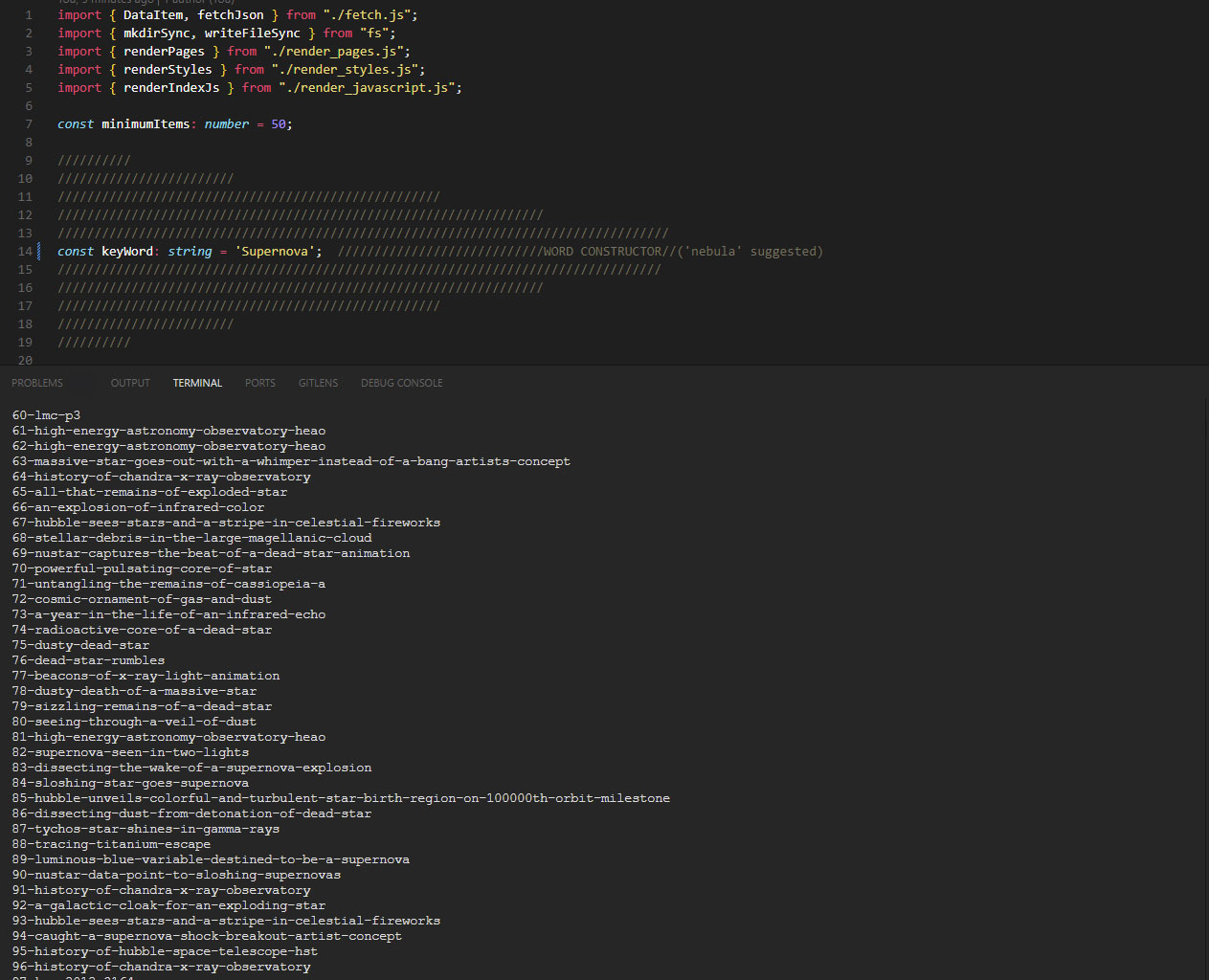

To illustrate the concept, we built a generator that does exactly this. About 200 lines of TypeScript, run with Bun (a runtime that executes JavaScript programs outside the browser).

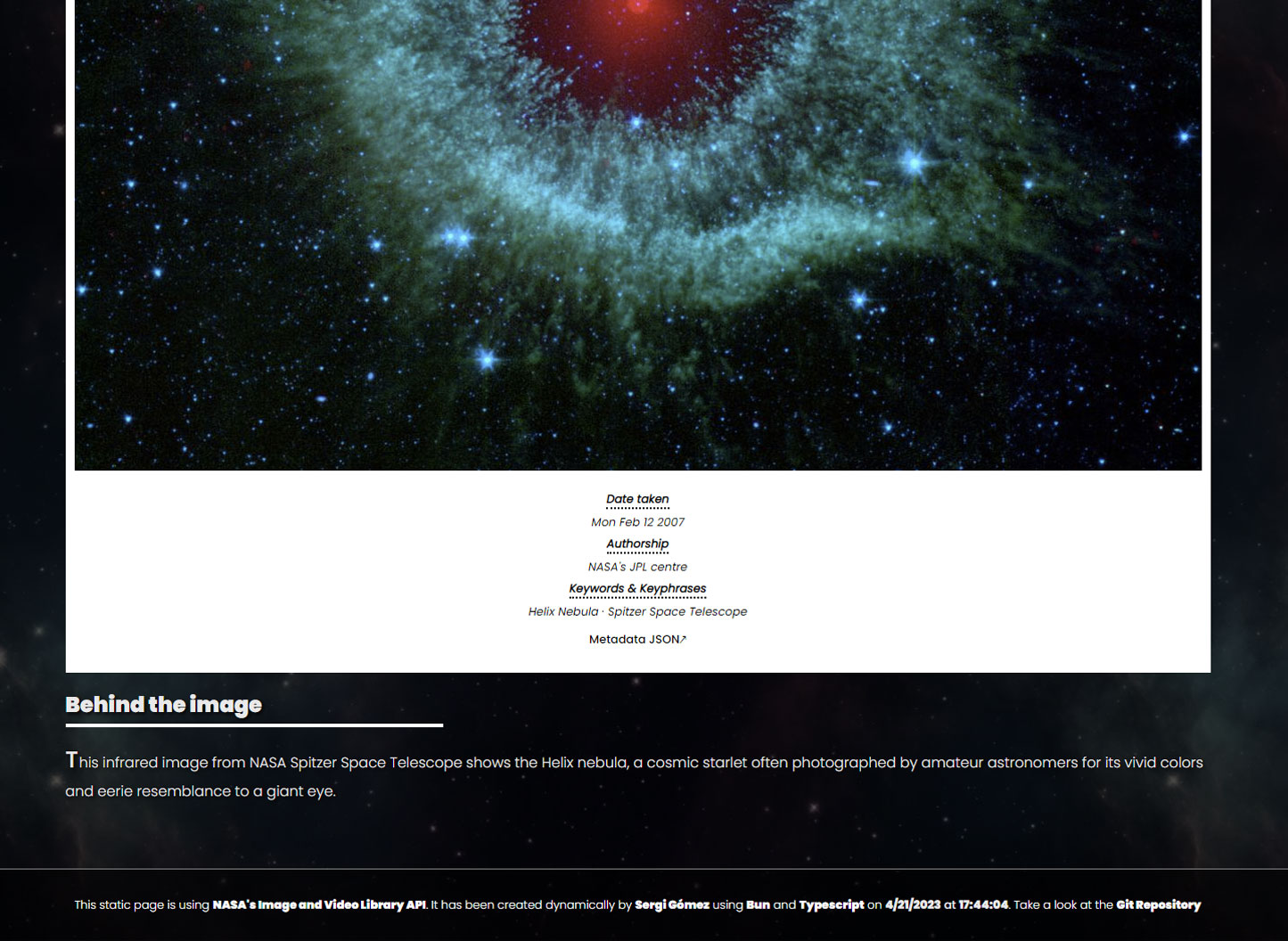

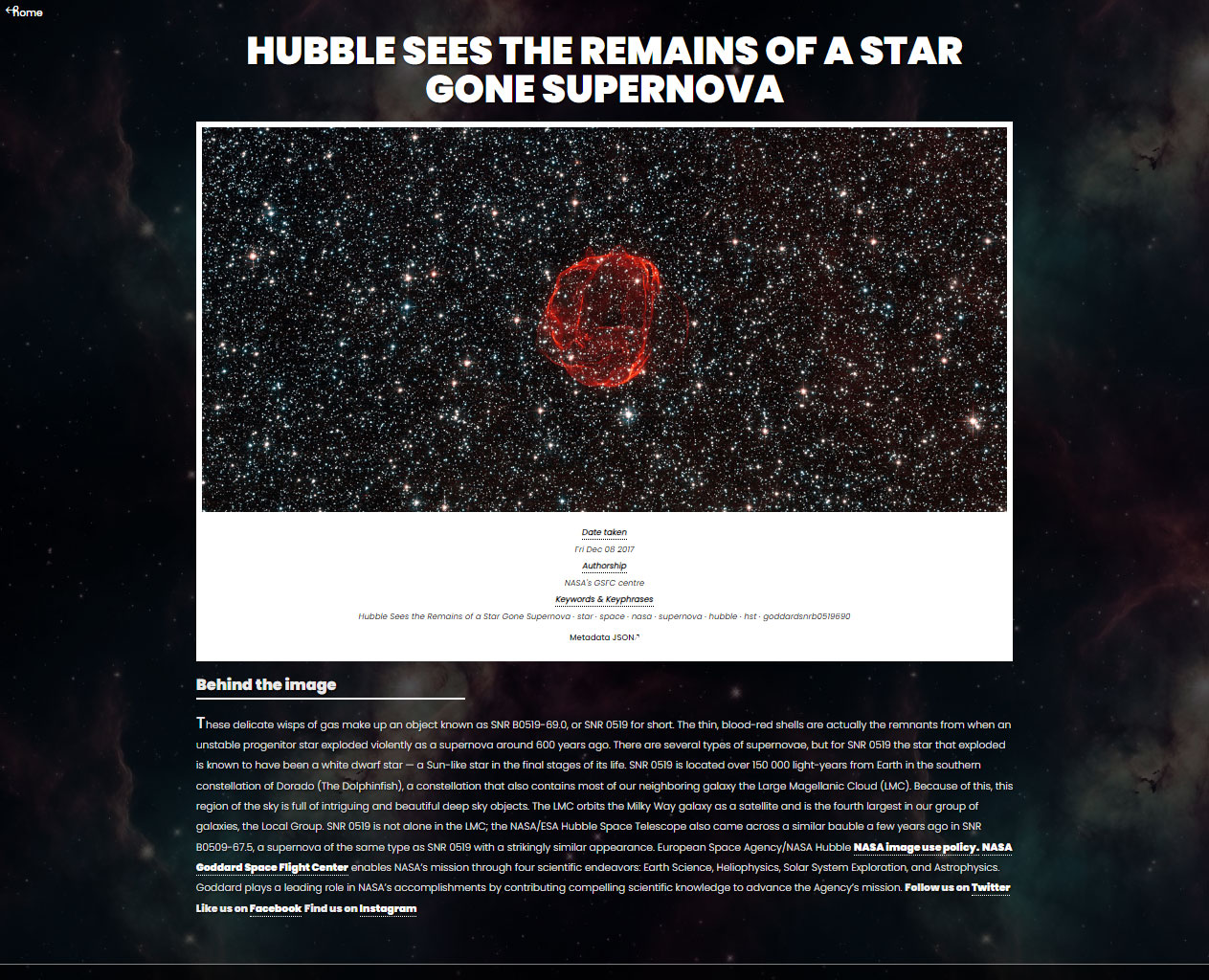

The data source: NASA's public image catalogue, accessible through an API (a service that lets any program query its content automatically). Each catalogue entry includes a title, description, publication date, keywords and a high-resolution image.
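The article does not name the endpoint, so the following sketch rests on an assumption: that the catalogue is the NASA Image and Video Library, searchable at `https://images-api.nasa.gov/search`, and that its response nests results under `collection.items`, with fields such as `nasa_id` and `date_created`. The `Entry` type and the function names are hypothetical. Treat it as one plausible way to query and flatten the data, not as the generator's actual code.

```typescript
// One catalogue entry, reduced to the fields the article mentions.
interface Entry {
  id: string;
  title: string;
  description: string;
  date: string;
  keywords: string[];
  imageUrl: string;
}

// Assumed response shape; only the fields used here are typed.
interface SearchResponse {
  collection: {
    items: Array<{
      data: Array<{
        nasa_id: string;
        title: string;
        description?: string;
        date_created: string;
        keywords?: string[];
      }>;
      links?: Array<{ href: string }>;
    }>;
  };
}

// Assumed endpoint for the NASA Image and Video Library search.
const API_BASE = "https://images-api.nasa.gov/search";

function buildSearchUrl(keyword: string): string {
  const params = new URLSearchParams({ q: keyword, media_type: "image" });
  return `${API_BASE}?${params.toString()}`;
}

// Flatten the nested response into plain entries, skipping items without a linked image.
function parseEntries(response: SearchResponse): Entry[] {
  const entries: Entry[] = [];
  for (const item of response.collection.items) {
    const meta = item.data[0];
    const link = item.links?.[0];
    if (!meta || !link) continue;
    entries.push({
      id: meta.nasa_id,
      title: meta.title,
      description: meta.description ?? "",
      date: meta.date_created,
      keywords: meta.keywords ?? [],
      imageUrl: link.href,
    });
  }
  return entries;
}

// Network call, defined here but not invoked in this sketch.
async function fetchEntries(keyword: string): Promise<Entry[]> {
  const res = await fetch(buildSearchUrl(keyword));
  if (!res.ok) throw new Error(`Catalogue request failed: ${res.status}`);
  return parseEntries((await res.json()) as SearchResponse);
}
```

Keeping the parsing separate from the network call means the same function can be exercised against a saved response, which is handy when the generator runs on a schedule.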

The process:

- The program queries the catalogue with a keyword (in this case, "nebula") and receives 94 results

- For each result, it generates an HTML page with the image, description and links to related pages

- It creates a gallery with all the thumbnails

- It generates a search feature that works without JavaScript (filtering directly via HTML)

- It writes the metadata (title, description, image) so Google can index each page individually

All in under 3 seconds. And the important point: by changing the word "nebula" to "mars", "saturn" or "galaxy", the same generator produces an entirely different site with its own pages, images and metadata. One line of configuration, an entire catalogue.
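Two of the steps above, the per-page metadata and the links to related pages, could be sketched as follows. This is an illustration under assumptions, not the generator's code: the `Entry` shape is hypothetical, "related" is taken to mean "shares at least one keyword", and the 160-character description limit is an arbitrary choice.

```typescript
// Hypothetical entry shape, limited to what this sketch needs.
interface Entry {
  title: string;
  description: string;
  imageUrl: string;
  keywords: string[];
}

// Turn a title into a URL-safe file name: "Crab Nebula (M1)" becomes "crab-nebula-m1".
function slugify(title: string): string {
  return title
    .toLowerCase()
    .replace(/[^a-z0-9]+/g, "-")
    .replace(/^-+|-+$/g, "");
}

// Escape text before placing it inside an HTML attribute or element.
function escapeHtml(text: string): string {
  return text
    .replace(/&/g, "&amp;")
    .replace(/</g, "&lt;")
    .replace(/>/g, "&gt;")
    .replace(/"/g, "&quot;");
}

// Per-page metadata: title, description and image, plus Open Graph equivalents.
function renderHead(entry: Entry): string {
  const title = escapeHtml(entry.title);
  // 160 characters is an arbitrary cut-off chosen for this sketch.
  const description = escapeHtml(entry.description.slice(0, 160));
  const image = escapeHtml(entry.imageUrl);
  return [
    `<title>${title}</title>`,
    `<meta name="description" content="${description}">`,
    `<meta property="og:title" content="${title}">`,
    `<meta property="og:description" content="${description}">`,
    `<meta property="og:image" content="${image}">`,
  ].join("\n");
}

// Related pages: other entries sharing at least one keyword (an assumed heuristic).
function relatedTo(entry: Entry, all: Entry[], limit = 3): Entry[] {
  const own = new Set(entry.keywords.map((k) => k.toLowerCase()));
  return all
    .filter((other) => other !== entry && other.keywords.some((k) => own.has(k.toLowerCase())))
    .slice(0, limit);
}

// Render the related entries as a plain list of links to their generated pages.
function renderRelatedLinks(related: Entry[]): string {
  const items = related.map(
    (e) => `<li><a href="${slugify(e.title)}.html">${escapeHtml(e.title)}</a></li>`,
  );
  return `<ul>\n${items.join("\n")}\n</ul>`;
}
```

Because every page is a plain file named after its slug, the related links are ordinary relative URLs and need no routing layer.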

Just as important, the final result is pure HTML with CSS. No framework, no JavaScript in the visitor's browser, no server processing each request. The visitor receives static files and that is it. That is why it is unbeatably fast.

If instead of NASA images the source were a shop's catalogue, a real estate agency's listings or a restaurant's dishes, the result would be the same: a complete website, indexable by Google and hostable on any basic server.

How does it stay up to date?

A static site does not mean an abandoned site. There are several ways to automate regeneration:

- Scheduled task (cronjob): a timer that runs the generator every day, week or hour. If the catalogue has changed, the pages are regenerated. If nothing has changed, the result is identical and there is no cost.

- Webhook (automatic notification): when the data source changes, it sends a signal to the system and the pages are regenerated on demand. It only rebuilds when needed.

- CI/CD pipeline (continuous integration): every time an update is published to the code or data, the system automatically rebuilds the entire site.

None of these options requires manual intervention. And if nothing changes on a given day, there is nothing new to publish.
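Whichever trigger is used, the "only rebuild when needed" idea can be approximated by fingerprinting the data and comparing it with the previous run. The sketch below is one possible approach, not something the article describes: `fingerprint` and `regenerateIfChanged` are hypothetical names, and the hash is a small non-cryptographic FNV-1a variant, enough to notice a change but not meant for security.

```typescript
// FNV-1a style hash over the JSON text (UTF-16 code units), returned as 8 hex digits.
function fingerprint(data: unknown): string {
  const text = JSON.stringify(data);
  let hash = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    hash ^= text.charCodeAt(i);
    hash = Math.imul(hash, 0x01000193);
  }
  return (hash >>> 0).toString(16).padStart(8, "0");
}

interface BuildResult {
  rebuilt: boolean;
  fingerprint: string;
}

// Run the build only when the data differs from the last recorded fingerprint.
function regenerateIfChanged(
  data: unknown,
  previousFingerprint: string | null,
  build: () => void,
): BuildResult {
  const current = fingerprint(data);
  if (current === previousFingerprint) {
    return { rebuilt: false, fingerprint: current };
  }
  build();
  return { rebuilt: true, fingerprint: current };
}

// Illustrative run: the second call sees identical data and skips the build.
let builds = 0;
const catalogue = [{ id: 1, name: "Oak table" }];
const first = regenerateIfChanged(catalogue, null, () => { builds++; });
const second = regenerateIfChanged(catalogue, first.fingerprint, () => { builds++; });
console.log(first.rebuilt, second.rebuilt, builds);
```

In practice the previous fingerprint would be stored between runs, for example in a small file next to the output. Note that `JSON.stringify` is sensitive to key order, so the data should be serialised consistently.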

What it's good for and what it's not

It works well for:

- Product catalogues that already exist in digital format

- Websites with repetitive content: data sheets, listings, directories

- Sites that need Google presence without having to manually create content for each page

- Projects where load speed is a priority

It is not the solution for:

- Applications with real-time interaction (chats, collaborative editors, dashboards)

- Websites with per-user personalised content (behind authentication)

- Projects where content changes every minute

Reflection

The web industry has spent the last couple of decades convincing us that building a website is a complex problem requiring complex tools. For a fraction of sites, that is true: a banking application, a cloud video editor or a real-time data dashboard justifies every byte of JavaScript it loads. But for the vast majority of sites (the corporate website, the catalogue, the portfolio, the blog), the fastest website is what it has always been: an HTML document with styles.

This does not mean giving up JavaScript. It means using it directly, without piling layer upon layer of software on top. In the development world, this is called going back to "vanilla JavaScript": writing just the code you need, with no middlemen. If you want an animation in the gallery or a visual effect in the header, you add a few lines of code and the result is smooth. What makes no sense is loading half a megabyte of tools to display what could be displayed with an HTML file.

And the industry is starting to move in that direction. There are libraries weighing less than 15 KB that do jobs previously requiring half-megabyte tools, and real projects that have abandoned heavy tooling to return to lighter solutions. Native browser technologies (the ones already built in, no installation required) have tripled their presence on websites in two years. Alex Russell, Chrome engineer, puts it bluntly: "frontend's hangover from the JavaScript party is gonna suck". What started as a minority current is becoming common sense.

Dressing up a shop window with a few lines of JavaScript is reasonable. Building the entire shop with a structure that weighs more than the merchandise is not.

The data cited in this article comes from the Web Almanac 2024 (almanac.httparchive.org), the HTTP Archive (httparchive.org) and analysis by Alex Russell (infrequently.org). Traffic figures for Wise, Zapier and Nomadlist are Ahrefs estimates.